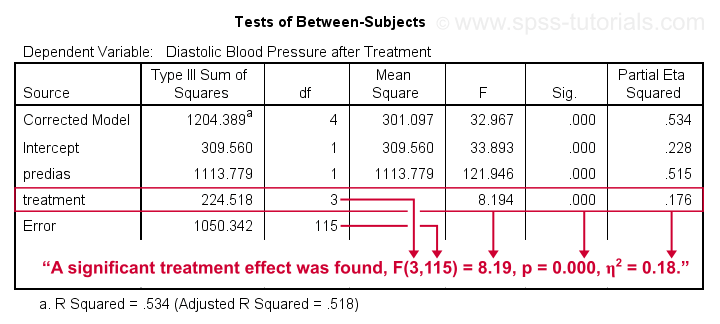

To calculate Type III sums of squares properly we must specify orthogonal contrasts. A wrong way, without setting contrasts # get type I sums of squares viagraModel.2 F) If we want Type I sums of squares, then we enter covariate(s) first, and the independent variable(s) second. This result means that it is appropriate to use partner’s libido as a covariate in the analysis.

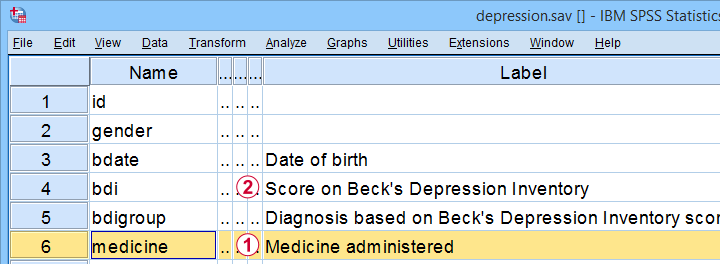

In other words, the means for partner’s libido are not significantly different in the placebo, low- and high-dose groups. 16, which shows that the average level of partner’s libido was roughly the same in the three Viagra groups. The main effect of dose is not significant, F(2, 27) = 1.98, p =. Check that the covariate and any independent variables are independent Self-test 3Ĭonduct an ANOVA to test whether partner’s libido (our covariate) is independent of the dose of Viagra (our independent variable). This means that for these data the variances are very similar. The output shows that Levene’s test is very non-significant, F(2, 27) = 0.33, p =. If you have violated the assumption of homogeneity of regression slopes, or if the variability in regression slopes is an interesting hypothesis in itself, then you can explicitly model this variation using multilevel linear models (see Chapter 19). Homogeneity of regression slopesįor example, if there’s a positive relationship between the covariate and the outcome in one group, we assume that there is a positive relationship in all of the other groups too. You might think that by adding depression as a covariate into the analysis you can look at the ‘pure’ effect of anxiety, but you can’t. Independence of the covariate and treatment effectįor example, anxiety and depression are closely correlated (anxious people tend to be depressed) so if you wanted to compare an anxious group of people against a non-anxious group on some task, the chances are that the anxious group would also be more depressed than the non-anxious group. It is a part of my another post Assumptions of statistics methods. Once a possible confounding variable has been identified, it can be measured and entered into the analysis as a covariate. If any variables are known to influence the dependent variable being measured, then ANCOVA is ideally suited to remove the bias of these variables.

Elimination of confounds: In any experiment, there may be unmeasured variables that confound the results (i.e., variables other than the experimental manipulation that affect the outcome variable).To reduce within-group error variance: If we can explain some of the ‘unexplained’ variance ($ SS_R $) in terms of other variables (covariates), then we reduce the error variance, allowing us to more accurately assess the effect of the independent variable ($ SS_M $).There are two reasons for including covariates: Covariates mean continuous variables that are not part of the main experimental manipulation but have an influence on the dependent variable. Use Anova() to test homogeneity of regression slopesĪNCOVA extends ANOVA by including covariates into the analysis.Check for homogeneity of regression slopes A correct way, with setting orthogonal contrasts.Check that the covariate and any independent variables are independent Independence of the covariate and treatment effect.

#How to test the homogeneity of slopes by spss version 25 code

Most code and text are directly copied from the book.

This post covers my notes of ANCOVA methods using R from the book “Discovering Statistics using R (2012)” by Andy Field.
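The first reason for including a covariate, shrinking $SS_R$, can be made concrete with a little arithmetic. The sketch below is a plain-Python illustration, not the book's R code. It assumes a single covariate with one common slope across groups, and the `Data` layout and function names are hypothetical. It compares the residual sum of squares of a one-way ANOVA (the pooled within-group SS of the outcome) with that of an ANCOVA. The ANCOVA figure additionally removes the part explained by the pooled within-group regression on the covariate.

```python
from typing import Dict, List, Tuple

# Hypothetical layout: group name -> list of (covariate, outcome) pairs.
Data = Dict[str, List[Tuple[float, float]]]


def within_group_sums(pairs: List[Tuple[float, float]]) -> Tuple[float, float, float]:
    """Centred sums (Sxx, Sxy, Syy) for the pairs of one group."""
    n = len(pairs)
    mean_x = sum(x for x, _ in pairs) / n
    mean_y = sum(y for _, y in pairs) / n
    sxx = sum((x - mean_x) ** 2 for x, _ in pairs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in pairs)
    syy = sum((y - mean_y) ** 2 for _, y in pairs)
    return sxx, sxy, syy


def residual_ss(data: Data) -> Tuple[float, float]:
    """Return (SS_R of a one-way ANOVA, SS_R of an ANCOVA with a common slope)."""
    sxx = sxy = syy = 0.0
    for pairs in data.values():
        gxx, gxy, gyy = within_group_sums(pairs)
        sxx, sxy, syy = sxx + gxx, sxy + gxy, syy + gyy
    ss_r_anova = syy                    # outcome variation left inside the groups
    ss_r_ancova = syy - sxy ** 2 / sxx  # minus what the pooled slope explains
    return ss_r_anova, ss_r_ancova


if __name__ == "__main__":
    toy = {  # hypothetical numbers, not Field's Viagra data
        "placebo": [(1, 2), (2, 3), (3, 5)],
        "high": [(1, 5), (2, 6), (3, 8)],
    }
    print(residual_ss(toy))
```

For this toy data the residual sum of squares drops from 28/3 (about 9.33) to 1/3. Because $(\sum S_{xy})^2 / \sum S_{xx} \ge 0$, adding the covariate can never increase $SS_R$. The price is one residual degree of freedom for the estimated slope.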
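Homogeneity of regression slopes can be tested by comparing two models. One gives every group its own slope; the other (ordinary ANCOVA) uses one common slope. The extra variance the separate slopes explain is the covariate-by-group interaction. In R this is typically done by adding that interaction term to the model and testing it with `Anova()`. The plain-Python sketch below computes the equivalent F statistic by hand. It assumes a single covariate, and the function names and toy data are hypothetical.

```python
from typing import Dict, List, Tuple

Data = Dict[str, List[Tuple[float, float]]]  # hypothetical: group -> (covariate, outcome) pairs


def within_group_sums(pairs: List[Tuple[float, float]]) -> Tuple[float, float, float]:
    """Centred sums (Sxx, Sxy, Syy) for the pairs of one group."""
    n = len(pairs)
    mean_x = sum(x for x, _ in pairs) / n
    mean_y = sum(y for _, y in pairs) / n
    sxx = sum((x - mean_x) ** 2 for x, _ in pairs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in pairs)
    syy = sum((y - mean_y) ** 2 for _, y in pairs)
    return sxx, sxy, syy


def slopes_homogeneity_f(data: Data) -> Tuple[float, int, int]:
    """F test of equal regression slopes across groups: (F, df_effect, df_error)."""
    sxx = sxy = syy = 0.0
    ss_r_separate = 0.0  # residual SS when each group has its own intercept and slope
    n_total = 0
    for pairs in data.values():
        gxx, gxy, gyy = within_group_sums(pairs)
        ss_r_separate += gyy - gxy ** 2 / gxx
        sxx, sxy, syy = sxx + gxx, sxy + gxy, syy + gyy
        n_total += len(pairs)
    ss_r_common = syy - sxy ** 2 / sxx  # own intercepts, one shared slope (ANCOVA)
    k = len(data)
    df_effect = k - 1           # extra slopes in the separate-slopes model
    df_error = n_total - 2 * k  # one intercept and one slope per group
    f = ((ss_r_common - ss_r_separate) / df_effect) / (ss_r_separate / df_error)
    return f, df_effect, df_error


if __name__ == "__main__":
    parallel = {"a": [(1, 1), (2, 3), (3, 2)], "b": [(1, 11), (2, 13), (3, 12)]}
    crossing = {"a": [(1, 1), (2, 3), (3, 2)], "b": [(1, 13), (2, 11), (3, 12)]}
    print(slopes_homogeneity_f(parallel))
    print(slopes_homogeneity_f(crossing))
```

The `parallel` toy groups share the same slope, so F is 0. In `crossing` the slopes have opposite signs, and F rises to 2/3 on (1, 2) degrees of freedom.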
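Levene’s test, reported above as F(2, 27) = 0.33, is a one-way ANOVA on each score’s absolute deviation from its group’s centre. Below is a plain-Python sketch with a hypothetical `levene_f` helper and toy data. With `center=statistics.mean` it is Levene’s original test. With `statistics.median` it is the Brown-Forsythe variant, which is the default `center` of `leveneTest()` in R’s car package. It returns only F and its degrees of freedom. A p-value would need the F distribution (for example `scipy.stats.f.sf`), left out here to keep the sketch dependency-free.

```python
import statistics
from typing import Callable, Dict, List, Tuple


def levene_f(groups: Dict[str, List[float]],
             center: Callable[[List[float]], float] = statistics.median
             ) -> Tuple[float, int, int]:
    """Levene-type test of equal variances: returns (F, df_between, df_within)."""
    # Step 1: absolute deviation of every score from its own group's centre.
    deviations: Dict[str, List[float]] = {}
    for name, values in groups.items():
        c = center(values)
        deviations[name] = [abs(v - c) for v in values]

    # Step 2: ordinary one-way ANOVA on those deviations.
    all_devs = [d for devs in deviations.values() for d in devs]
    grand_mean = sum(all_devs) / len(all_devs)
    ss_between = 0.0
    ss_within = 0.0
    for devs in deviations.values():
        mean = sum(devs) / len(devs)
        ss_between += len(devs) * (mean - grand_mean) ** 2
        ss_within += sum((d - mean) ** 2 for d in devs)
    df_between = len(groups) - 1
    df_within = len(all_devs) - len(groups)
    f = (ss_between / df_between) / (ss_within / df_within)
    return f, df_between, df_within


if __name__ == "__main__":
    # Hypothetical scores: group "b" is twice as spread out as group "a".
    print(levene_f({"a": [1, 2, 3], "b": [2, 4, 6]}, center=statistics.mean))
```

Groups whose deviations are identical give F = 0. A large F means the spreads differ, which is the opposite of the “very similar” variances reported above.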
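Self-test 3 is an ordinary one-way ANOVA with the covariate (partner’s libido) as the outcome and dose as the grouping factor. Below is a minimal plain-Python sketch of that F statistic, using a hypothetical `one_way_anova` helper and synthetic numbers rather than the book’s data. With 30 scores in three groups the degrees of freedom are (2, 27), matching the F(2, 27) reported above.

```python
from typing import Dict, List, Tuple


def one_way_anova(groups: Dict[str, List[float]]) -> Tuple[float, int, int]:
    """F statistic and degrees of freedom (F, df_between, df_within)."""
    scores = [s for values in groups.values() for s in values]
    grand_mean = sum(scores) / len(scores)
    ss_between = 0.0  # SS_M: spread of group means around the grand mean
    ss_within = 0.0   # SS_R: spread of scores around their own group mean
    for values in groups.values():
        mean = sum(values) / len(values)
        ss_between += len(values) * (mean - grand_mean) ** 2
        ss_within += sum((s - mean) ** 2 for s in values)
    df_between = len(groups) - 1
    df_within = len(scores) - len(groups)
    f = (ss_between / df_between) / (ss_within / df_within)
    return f, df_between, df_within


if __name__ == "__main__":
    # Synthetic covariate scores for three dose groups of ten (not the book's data).
    covariate = {
        "placebo": [float(i) for i in range(10)],
        "low": [i + 0.5 for i in range(10)],
        "high": [i + 1.0 for i in range(10)],
    }
    print(one_way_anova(covariate))
```

The post takes a non-significant F here (p = .16 above) as grounds for using the covariate. The p-value itself would come from the F distribution, for example `scipy.stats.f.sf(f, df_between, df_within)`.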
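Type I (sequential) sums of squares depend on the order in which terms enter the model, which is why the post enters the covariate first. The plain-Python sketch below computes both orders from nested residual sums of squares. Each term’s SS is the drop in $SS_R$ when it is added after the terms before it. The function names are hypothetical, and the toy data deliberately let the covariate differ between groups.

```python
from typing import Dict, List, Tuple

Data = Dict[str, List[Tuple[float, float]]]  # hypothetical: group -> (covariate, outcome) pairs


def centred_sums(pairs: List[Tuple[float, float]]) -> Tuple[float, float, float]:
    """Centred sums (Sxx, Sxy, Syy) for a list of (x, y) pairs."""
    n = len(pairs)
    mean_x = sum(x for x, _ in pairs) / n
    mean_y = sum(y for _, y in pairs) / n
    sxx = sum((x - mean_x) ** 2 for x, _ in pairs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in pairs)
    syy = sum((y - mean_y) ** 2 for _, y in pairs)
    return sxx, sxy, syy


def type_i_ss(data: Data) -> Dict[str, Dict[str, float]]:
    """Sequential SS for 'covariate then group' and 'group then covariate'."""
    all_pairs = [p for pairs in data.values() for p in pairs]
    txx, txy, tyy = centred_sums(all_pairs)  # totals, ignoring groups
    sxx = sxy = syy = 0.0                     # pooled within-group sums
    for pairs in data.values():
        gxx, gxy, gyy = centred_sums(pairs)
        sxx, sxy, syy = sxx + gxx, sxy + gxy, syy + gyy

    ss_total = tyy
    ss_r_cov_only = tyy - txy ** 2 / txx  # covariate alone: one overall slope
    ss_r_group_only = syy                 # group alone: one-way ANOVA
    ss_r_both = syy - sxy ** 2 / sxx      # ANCOVA: group means plus common slope
    return {
        "covariate first": {"covariate": ss_total - ss_r_cov_only,
                            "group": ss_r_cov_only - ss_r_both},
        "group first": {"group": ss_total - ss_r_group_only,
                        "covariate": ss_r_group_only - ss_r_both},
    }


if __name__ == "__main__":
    toy = {"g1": [(1, 2), (2, 3), (3, 5)], "g2": [(3, 5), (4, 6), (5, 8)]}
    print(type_i_ss(toy))
```

In this toy data, the covariate entered first takes 22.5 and leaves nothing for the grouping factor. Entered second, it gets only 9, while the groups take 13.5. Both orders add up to the same 22.5; only the split changes.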
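The post notes that Type III sums of squares need orthogonal contrasts. With equal group sizes, that means each contrast’s weights sum to zero and every pair of contrasts has a zero cross-product. R’s default treatment (dummy) coding fails the first condition. Below is a small plain-Python check, assuming equal group sizes and a hypothetical `is_orthogonal_set` helper. The contrasts shown (placebo vs. both Viagra doses, low vs. high dose) are illustrative choices.

```python
from itertools import combinations
from typing import Sequence


def is_orthogonal_set(contrasts: Sequence[Sequence[int]]) -> bool:
    """True if every contrast sums to zero and all pairs are orthogonal (equal n assumed)."""
    if any(sum(c) != 0 for c in contrasts):
        return False
    return all(sum(a * b for a, b in zip(c1, c2)) == 0
               for c1, c2 in combinations(contrasts, 2))


if __name__ == "__main__":
    # Factor levels ordered: placebo, low, high.
    planned = [[-2, 1, 1], [0, -1, 1]]  # placebo vs Viagra; low vs high
    dummy = [[0, 1, 0], [0, 0, 1]]      # treatment (dummy) coding
    print(is_orthogonal_set(planned), is_orthogonal_set(dummy))
```

In R, such a set is attached to the factor before fitting, for example `contrasts(f) <- cbind(c(-2, 1, 1), c(0, -1, 1))`. The Type III table then comes from `Anova(model, type = "III")` in the car package.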
